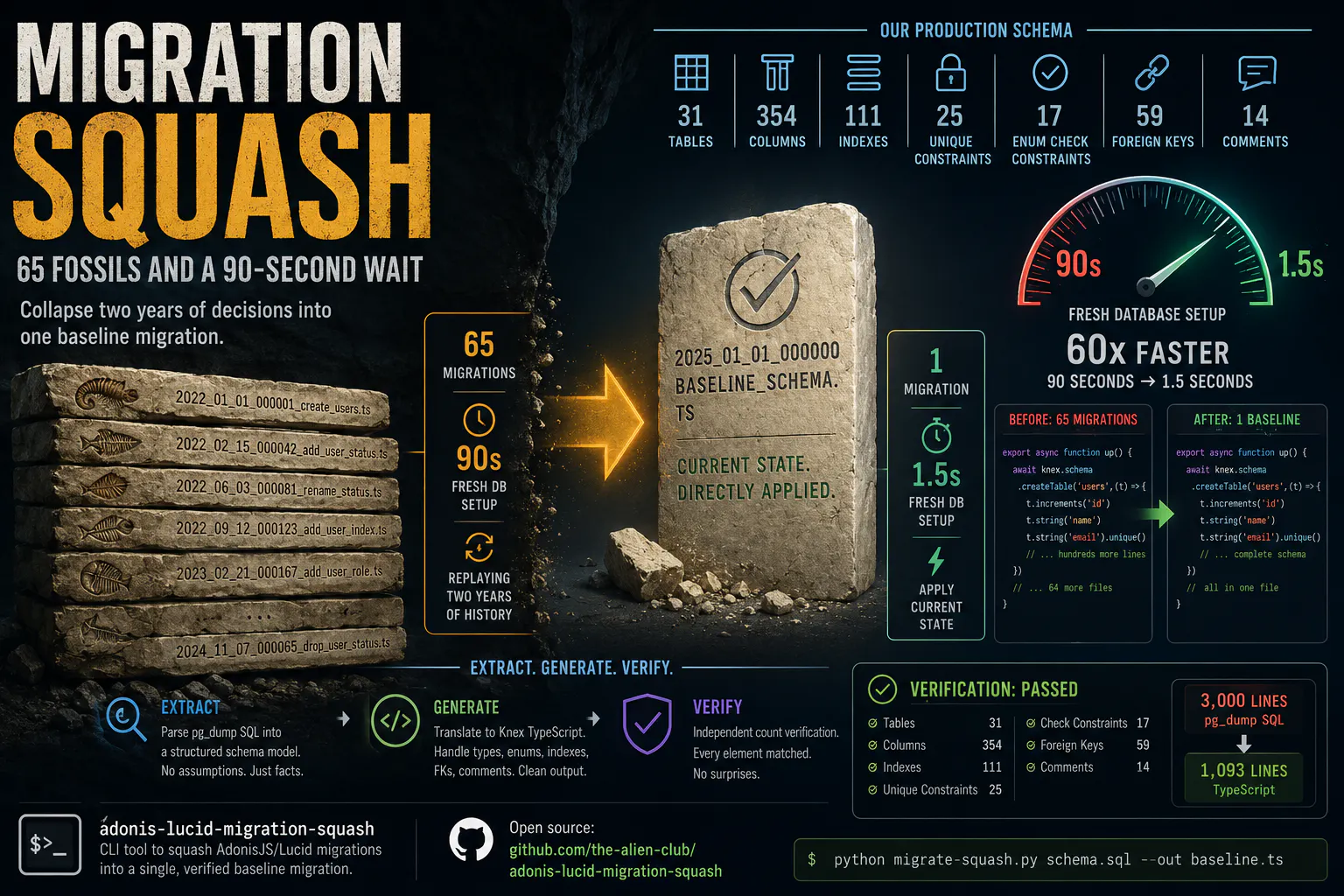

Adonis JS Migration Squash

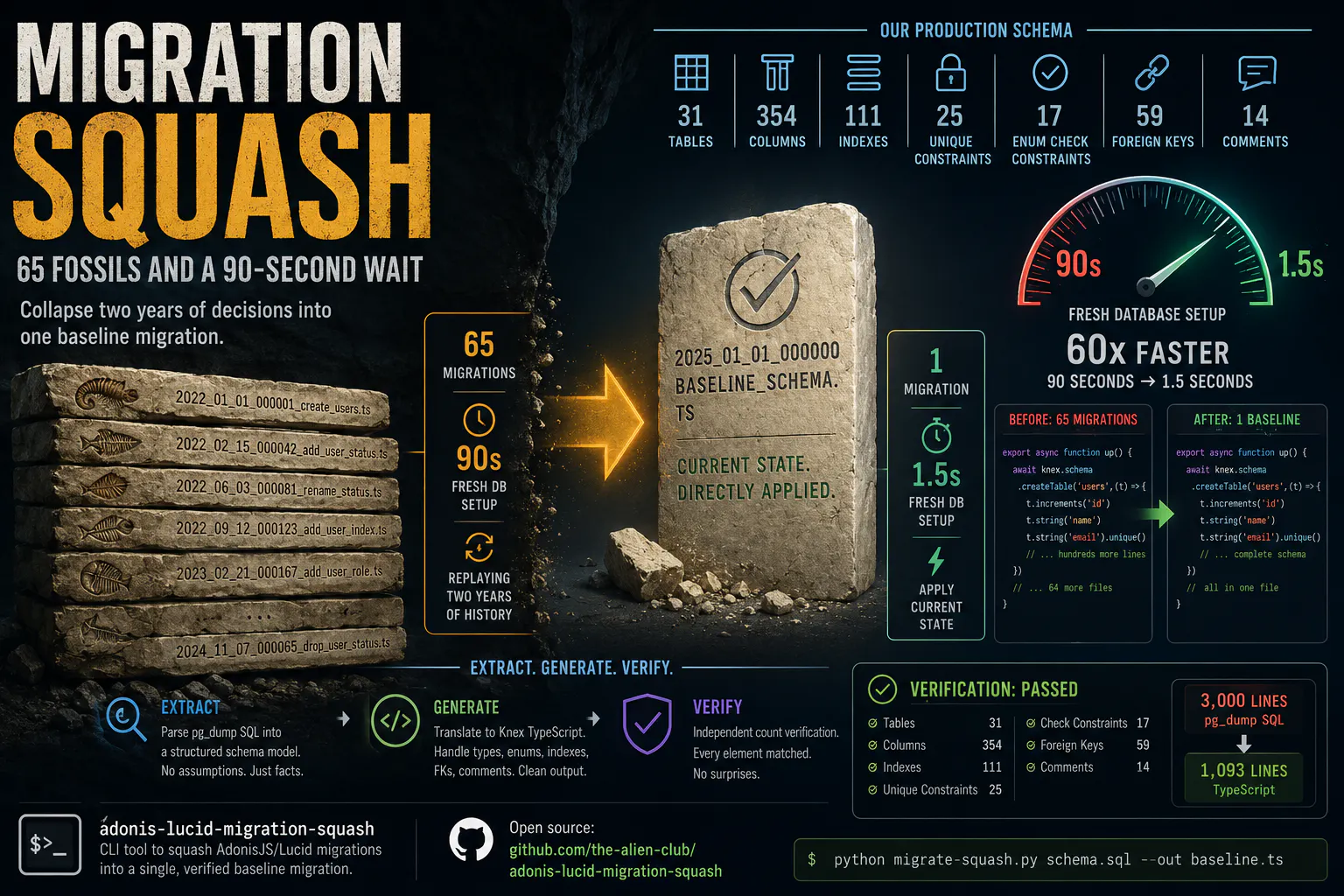

65 Fossils and a 90-Second Wait

Every decision leaves a trace.

AdonisJS/Lucid uses timestamped migration files. Each one is atomic, reversible, auditable. The convention is good. You add a column: one migration. You rename it six months later: another migration. You add an index a year after that: a third. Each decision is a permanent entry in the migration directory, waiting to be replayed.

This is not a flaw. Migrations as an append-only log is the right design. You want to know that every database in every environment followed the same sequence of transformations. You want rollback. You want auditability.

The problem appears at the other end of time. Two years into a project, sixty-five decisions live in that directory. Some of them contradict earlier decisions. Some of them were mistakes corrected a month later. The column added in migration 12 was renamed in migration 34, had an index added in migration 47, and was dropped entirely in migration 58. That entire story is encoded in the directory, and every time you spin up a fresh database, Knex replays all of it.

Ninety seconds.

Not because the schema is large. Because there are sixty-five files and Knex runs them sequentially.

Ninety seconds per fresh environment. Ninety seconds per new developer onboarding. Ninety seconds per CI pipeline that spins up a clean test database. Ninety seconds every time you bisect a bug and need to reset.

The history is there for humans to understand decisions. The machine doesn't need the history. The machine needs the current state.

The Squash Problem

Migration squashing — collapsing history into a single baseline migration that represents current schema state — is the accepted solution. The AdonisJS documentation mentions it. The tooling to do it reliably does not exist in the ecosystem.

The naive approach is to write the baseline by hand. Export the schema from your database, read through it, manually translate each table and index into Knex syntax. For a small schema this is tedious but tractable. For a schema with thirty-one tables, three hundred and fifty-four columns, a hundred and eleven indexes, fifty-nine foreign keys, seventeen enum-style check constraints, and fourteen column comments, it is several days of careful, error-prone work.

The automated approach requires solving several problems:

PostgreSQL's pg_dump output is SQL. Knex migrations are TypeScript. The translation is not trivial. PostgreSQL types do not map one-to-one to Knex methods. timestamp with time zone and timestamp without time zone are different in meaningful ways. character varying(255) has a length that must be preserved. numeric(10,2) has precision and scale. Array types (text[]) have no native Knex equivalent and need specificType(). Each case requires a decision.

PostgreSQL's CHECK constraints are used for enum emulation — CONSTRAINT user_status_check CHECK (status = ANY(ARRAY['active', 'inactive', 'suspended'])) — and need to be recognized and translated to Knex's table.enu() instead of a raw check constraint.

The down() method must be correct. Not approximately correct. A baseline migration that drops tables in the wrong order, leaving foreign key references dangling, is worse than no baseline at all.

And most importantly: the output must be verifiable. A squash that silently drops three foreign keys is a disaster waiting to happen. You will not notice it until a query returns data it should not return, or a constraint violation surfaces in production that was never caught in development because the constraint was never written.

Extract, Generate, Verify

adonis-lucid-migration-squash is a Python command-line tool. No dependencies. Pure standard library. You give it a pg_dump SQL file. It gives you a Knex TypeScript migration.

The architecture is a strict pipeline: extract, generate, verify.

The extraction layer is a family of single-responsibility parsers. TableExtractor walks CREATE TABLE blocks, parses column definitions, detects primary keys, strips PostgreSQL type casts from default values, and identifies CHECK-based enum constraints on a first pass before parsing columns on a second. IndexExtractor parses CREATE INDEX statements. ForeignKeyExtractor parses ALTER TABLE ... ADD CONSTRAINT ... FOREIGN KEY blocks with their ON DELETE behaviors. Each extractor works on the raw SQL text via regex. Each produces a typed dataclass.

The generation layer translates the extracted model into Knex syntax. The type mapper handles all the edge cases — length-preserving string types, precision-preserving numerics, timezone-aware timestamps, array types that fall through to specificType(). Indexes, unique constraints, and foreign keys for the same table are grouped into a single alterTable() call rather than one per constraint, which halves the output size.

The verifier runs after parsing and before any file is written. It is not the parser checking its own work. It is a separate code path that opens the original SQL file independently, re-counts every element category — tables, columns, indexes, foreign keys, unique constraints, check constraints — using its own regex patterns, and diffs those counts against what the parser produced. If the counts do not match, you know before anything touches disk.

This is the part we cared most about. Squashing is an operation with no margin for silent failure. The schema you end up with must be byte-for-byte equivalent to the schema you started with. The verifier is the guarantee that the translation did not lose anything.

The Numbers

Our production schema: 31 tables, 354 columns, 111 indexes, 25 unique constraints, 17 enum-style check constraints, 59 foreign keys, 14 column comments. Three thousand lines of pg_dump SQL built up over two years.

Output: 1,093 lines of clean TypeScript. One migration file.

Schema diff against the original pg_dump: empty. The resulting Knex migration, when applied to a fresh database, produces a schema identical to the original.

Fresh database setup time: 90 seconds to 1.5 seconds.

The history still exists. The git log still shows every decision. But the machine no longer has to replay two years of decisions to arrive at the current state. It applies the current state directly.

The best migrations are the ones that never existed in the first place. The second best are the ones collapsed into a baseline no one has to think about again.